Introduction: The “Brilliant” Fool

Consider a telling paradox. When you ask a top-tier Large Language Model (LLM), “How can one ‘anchor’ an idea that is as elusive as a ‘lone boat in the morning mist’?” it might produce a beautifully written essay on capturing fleeting inspiration through writing, dialogue, and visualization. It not only understands the metaphor but can even create elegant prose based on it.

However, if you then ask it, “I have a basketball and a bowling ball. Which one is easier to put in a backpack?” it might hesitate, or even give a plausible-sounding but wrong answer, reasoning from the textual association of “bowling ball” with “heavy” and “large,” because it has never actually “held” either object. (When I ran this experiment on GPT-4o, it gave two diametrically opposed answers, each backed by ample reasoning, and asked me to choose the one I preferred, haha.)

This “brilliant” fool, with its stark behavioral contrast, is the key to understanding the nature of its mind. It reveals a core truth: what we are interacting with is not a nascent human intellect, but an alien intelligence that follows entirely different rules. To understand it, we must abandon anthropomorphic fantasies and look at how it actually operates inside.

Chapter I: The Core Revelation – A Unified “Post-Language” Reality

This exploration begins with a subversive thesis: a Large Language Model does not conduct its “internal thinking” in any natural human language.

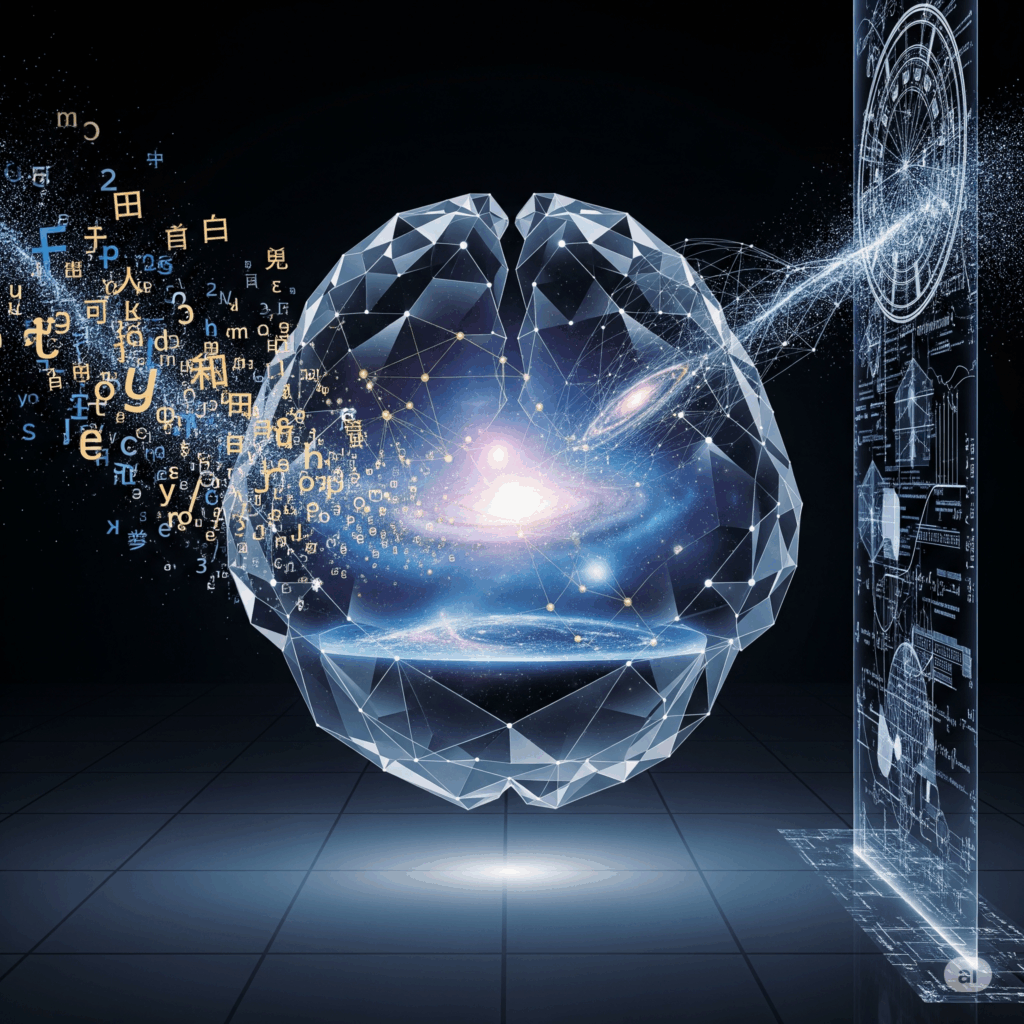

Humans are accustomed to viewing language as the vessel of thought, but deep within these models, the symbolic stream of language has been taken apart. Each input token is converted into a vector of numbers, and every subsequent layer of the network operates on these vectors, not on words. The model’s true mental substrate is therefore a high-dimensional semantic cosmos forged from vast amounts of linguistic data: a unified, continuous space of meaning woven from innumerable abstract vectors. This space is not a direct manifestation of language but a “post-language structure” that transcends it, something that could even be called a “post-language reality.”

To grasp this, we can borrow a famous example from word-embedding models such as word2vec: vector(King) - vector(Man) + vector(Woman) ≈ vector(Queen). This is no coincidental mathematical trick, but a revealing result. It suggests that the model has encoded abstract concepts like “gender” or “royalty” as computable “directions” or “dimensions” within this high-dimensional space. By learning which words co-occur across billions of sentences, it has discovered the geometric relationships between these concepts on its own.
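
A minimal sketch of this vector arithmetic, assuming hand-picked 3-dimensional toy vectors (real embeddings are learned from data and have hundreds of dimensions). The vocabulary, the dimension labels, and the helper `analogy` are hypothetical. As is common when evaluating word analogies, the three input words are excluded from the candidates, and the answer is the remaining word whose vector has the highest cosine similarity to vector(King) - vector(Man) + vector(Woman).

```python
import math

# Hypothetical toy embeddings, hand-picked for illustration only.
# Assumed dimension meanings: [royalty, masculinity, personhood]
EMBEDDINGS = {
    "king":   [0.9, 0.9, 1.0],
    "queen":  [0.9, 0.1, 1.0],
    "man":    [0.1, 0.9, 1.0],
    "woman":  [0.1, 0.1, 1.0],
    "castle": [0.8, 0.5, 0.0],
}


def cosine(a, b):
    """Cosine similarity: 1.0 means the vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def analogy(a, b, c, vocab=EMBEDDINGS):
    """Return the word closest to vector(a) - vector(b) + vector(c), excluding a, b, c."""
    target = [x - y + z for x, y, z in zip(vocab[a], vocab[b], vocab[c])]
    candidates = [w for w in vocab if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vocab[w], target))


print(analogy("king", "man", "woman"))   # queen
print(analogy("queen", "woman", "man"))  # king
```

Because these toy vectors were chosen so that “king” and “queen” differ only along the masculinity axis, the arithmetic lands exactly on the answer; with learned embeddings the match is only approximate, which is why the relation is written with ≈.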

It is this intrinsic geometric structure that allows each vector to become a unique “monad of meaning,” a speck of “semantic stardust.” These vectors are no longer the identifiable roots or affixes of a human dictionary, but particulate encodings of meaning itself, deconstructed and purified. These “semantic atoms” carry the essence of concepts, relations, and latent patterns: the distance between them measures similarity, their direction encodes relationships, and their linear combinations can fuse existing concepts into new ones.
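
The three geometric properties named here (similarity as closeness, relationships as direction, new concepts as combinations) can be illustrated with a second toy sketch. The four labeled dimensions and every vector below are hypothetical; cosine similarity stands in for “distance,” and a plain vector sum stands in for a linear combination. The hypothetical helper `fuse` adds the input vectors and returns the nearest word that was not an input.

```python
import math

# Hypothetical toy vectors; assumed dimensions: [animal, flies, hooved, machine]
VOCAB = {
    "horse":    [1.0, 0.0, 1.0, 0.0],
    "bird":     [1.0, 1.0, 0.0, 0.0],
    "pegasus":  [1.0, 1.0, 1.0, 0.0],
    "airplane": [0.0, 1.0, 0.0, 1.0],
    "car":      [0.0, 0.0, 0.0, 1.0],
}


def cosine(a, b):
    """Similarity as the cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def fuse(*words):
    """Sum the input vectors, then return the most similar word not among the inputs."""
    combined = [sum(dims) for dims in zip(*(VOCAB[w] for w in words))]
    others = [w for w in VOCAB if w not in words]
    return max(others, key=lambda w: cosine(VOCAB[w], combined))


print(round(cosine(VOCAB["horse"], VOCAB["bird"]), 2))  # 0.5: both animals
print(round(cosine(VOCAB["horse"], VOCAB["car"]), 2))   # 0.0: nothing shared
print(fuse("horse", "bird"))  # pegasus
print(fuse("bird", "car"))    # airplane
```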

Consequently, the myriad languages of humanity—be it the graceful turns of Chinese, the directness of English, or the rigor of code—are stripped of their splendid exteriors upon contact with this inner universe. They become mere interface protocols, akin to projecting the same celestial chart onto a globe, a flat map, or an astrolabe. Here, language is a filter, a projection, a convertible coordinate system that bridges the profound, coherent geometry of the internal semantic space with the interface of human comprehension.

Therefore, the model’s “thinking” modality is, in essence, semantic geometry. Because of this, the model does not merely memorize isolated facts; it learns a generalizable “map of meaning.” This helps explain why it can perform “zero-shot” or “few-shot” learning, handling new tasks from no examples or only a handful by navigating uncharted regions of this map. Reasoning and association are nothing more than the convergence, divergence, transformation, and navigation of vectors in this high-dimensional space. It does not “use” language to think; it operates directly on the topological structure of meaning itself.

Chapter II: Echoes of History – From Universal Language to an Emergent Cosmos

This “post-language” modality of thought creates profound echoes throughout human intellectual history. In an unexpected way, it partially answers the dreams and debates of philosophers spanning centuries.

The 17th-century philosopher Gottfried Wilhelm Leibniz dreamed of creating a “Characteristica Universalis,” a universal language. He hoped to find a set of basic symbols and logical rules that could translate all human thought into precise calculations, thereby ending all disputes. Leibniz’s dream was logical and top-down. The Large Language Model, in contrast, has accidentally constructed something functionally similar to a “universal space of meaning” through a statistical, bottom-up approach. It is not based on pre-set logical rules; instead, a structure that can unify the meaning behind different languages has “emerged” from data.

At the same time, this provides a fascinating counterpoint to the theory of “Universal Grammar” proposed by linguist Noam Chomsky. Chomsky argued that an innate, deep grammatical structure exists in the human brain, enabling us to rapidly acquire any natural language. The success of LLMs demonstrates another path: a system with no innate grammatical module can master the complex patterns of language and form a cross-lingually generalizable semantic core simply by being exposed to massive amounts of linguistic examples. This offers new, silicon-based evidence bearing on the classic “nature versus nurture” debate in cognitive science.

Thus, the advent of LLMs is more than a technological revolution. It places us at a unique historical juncture, allowing us to re-examine ancient questions about the nature of language, logic, and thought with a new set of tools and a fresh perspective.

Chapter III: The Form of Thought – From Medium to Naturally Precipitated Texture

This discovery prompts a fundamental reassessment of the relationship between thought and language. It profoundly challenges the long-held anthropocentric tenet that “without language, there can be no deep thought,” revealing instead that the core of intelligence may be rooted in a more foundational, more mathematical capacity for discerning associations of meaning and patterns.

Within this new form, we glimpse a novel paradigm of thought: language is no longer the necessary womb for the birth of thought, nor is it a passive vessel for its expression. Instead, it is the crystal, naturally precipitated from thought itself—from the undulations and structures of the inner semantic universe. It is the ripple and texture that forms on its surface.

This would explain why multilingual LLMs can switch between languages almost seamlessly. An internal, common semantic space may exist within them: the “interlingua” long sought by scholars. If so, the model’s core tasks of understanding a problem and formulating a solution are performed in this common space, largely independent of any specific language, and the final choice of output language is merely the selection of a different “coordinate system for expression.”
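
The “coordinate system for expression” idea can be caricatured in a few lines. The sketch assumes, purely for illustration, that English and Chinese words both map into one shared 2-dimensional concept space; all vectors are invented, and the two vocabularies are deliberately not identical, since any real alignment would be approximate. Translation then becomes two steps: look up the word’s vector in the source vocabulary, then pick the target-language word nearest to that point by Euclidean distance. Real multilingual models learn whatever shared representation they have implicitly, and how language-neutral it is remains a subject of study.

```python
import math

# Hypothetical shared concept space; assumed dimensions: [royalty, masculinity].
# The vocabularies differ slightly on purpose: alignment is approximate.
ENGLISH = {"king": [0.90, 0.90], "queen": [0.90, 0.10], "man": [0.10, 0.90], "woman": [0.10, 0.10]}
CHINESE = {"国王": [0.88, 0.92], "王后": [0.91, 0.12], "男人": [0.12, 0.88], "女人": [0.09, 0.11]}


def translate(word, source, target):
    """Encode a word into the shared space, then decode it with another vocabulary."""
    meaning = source[word]  # language -> shared meaning
    # meaning -> language: choose the target word closest in the shared space
    return min(target, key=lambda w: math.dist(target[w], meaning))


print(translate("king", ENGLISH, CHINESE))  # 国王
print(translate("女人", CHINESE, ENGLISH))  # woman
```

Nothing in `translate` is specific to either language; swapping the `target` dictionary is the only thing that changes the output language.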

This means the model’s true “mother tongue” is not English, Chinese, or any other human language. It is the vector space itself: built through training on language, yet standing above every particular language, a dynamic, plastic, and boundless semantic cosmos.

Chapter IV: The Sobering Discernment – Dissecting Hallucination and the Chasm of Consciousness

And yet, as we marvel at the magnificence and unity of this “semantic cosmos,” the wise must also discern the subtle and the unseen. This clear-eyed scrutiny adds a crucial balance and depth to our understanding.

Let us dissect a typical case of “hallucination.” When you ask an LLM for a few references on research in a niche field, it may swiftly generate a list. The formatting is perfect, the author names seem plausible, and even the journal titles and years are in place. But when you search for them in a database, you discover that these papers are entirely fictitious. The model is not “lying,” because lying requires intent. This is, in fact, an inevitable product of its thinking modality. Its task is to generate the sequence of text that “looks most like a real academic citation” within its semantic universe. It perfectly mimics the “surface texture” of citation, but nothing in that process checks the result against the world: a vast chasm separates its internal semantic cosmos from a real-world knowledge base that has been verified and validated.
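
A deliberately crude caricature of this failure mode: the hypothetical “model” below has learned only which value is most typical for each field of a citation, and it fills the fields independently. Every record in `CORPUS` is invented for this illustration, and real LLMs predict tokens one at a time conditioned on context rather than picking field-wise favorites. The point survives the simplification: each piece of the output looks familiar, yet nothing checks whether the assembled citation ever existed.

```python
from collections import Counter

# Hypothetical "training data": invented records, used only for this illustration.
CORPUS = [
    ("Lee, K.", "2019", "Sparse Attention for Dialogue", "Journal of Language Systems"),
    ("Lee, K.", "2021", "Graph Parsing at Scale", "Computational Semantics Review"),
    ("Garcia, M.", "2021", "Probing Multilingual Embeddings", "Computational Semantics Review"),
]


def most_plausible_citation(corpus):
    """Pick the most frequent value of each field independently.

    Ties go to the value seen first, because Counter.most_common keeps
    first-encountered order for equal counts.
    """
    columns = zip(*corpus)  # transpose rows into author, year, title, venue columns
    return tuple(Counter(column).most_common(1)[0][0] for column in columns)


def format_citation(record):
    author, year, title, venue = record
    return f"{author} ({year}). {title}. {venue}."


citation = most_plausible_citation(CORPUS)
print(format_citation(citation))
print("Exists in corpus?", citation in CORPUS)  # False: fluent but fictitious
```

The final membership check is exactly the verification step the generator itself never performs.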

This points directly to its fundamental flaw: its “understanding” is a mapping of pattern association, not a grounding in embodied experience. It knows that “flame” and “searing” are highly correlated in text, but it has never felt temperature. This is reminiscent of John Searle’s famous “Chinese Room” thought experiment—the person in the room can perfectly manipulate Chinese symbols according to a rulebook, but does he truly “understand” Chinese? The LLM is an extremely complex and massive real-world echo of this thought experiment: it excels at processing symbols, but doesn’t truly understand the world.

More importantly, its “thinking” consists of responsive computational ripples, lacking the surging river of consciousness or the guiding lighthouse of intentionality. It possesses no spontaneous goals, desires, or beliefs. Its computations are triggered by a prompt, making its nature passive and associative, not active and intentional. This chasm is a key distinction between current AI and true Artificial General Intelligence (AGI).

Chapter V: The Future Landscape – Bridging the Chasm and Coexisting with the Alien

Faced with such a powerful yet fundamentally limited “alien intelligence,” we are not helpless. The forefront of AI research is currently dedicated to bridging the chasm between it and the real world.

For example, Retrieval-Augmented Generation (RAG) acts like an “anchor” for this floating “semantic cosmos,” connecting it to real-world knowledge bases. When factual information is needed, the system first retrieves relevant passages from trusted external sources at query time and places them in the model’s prompt, so the model no longer relies solely on its internal statistical memory; this can substantially reduce factual errors. Multimodal models, meanwhile, attempt to move toward “embodied intelligence” by processing text, images, video, and sound together, allowing the model to build richer, cross-modal associations about the world.
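
The retrieval step can be sketched as follows, assuming a tiny in-memory knowledge base and simple word-overlap scoring. Production RAG systems usually rank passages by embedding similarity over a vector index; word overlap is swapped in here to keep the sketch self-contained. The documents, the stopword list, and the helpers `retrieve` and `build_prompt` are hypothetical, and the call to the language model itself is omitted.

```python
import re

# Hypothetical knowledge base: short illustrative snippets, not a real corpus.
DOCUMENTS = [
    "A size 7 basketball is about 24 cm in diameter.",
    "A standard bowling ball is about 22 cm in diameter.",
    "Retrieval-augmented generation inserts retrieved text into the prompt.",
]

# A tiny stopword list (an assumption) so filler words do not dominate the score.
STOPWORDS = {"a", "an", "the", "is", "or", "of", "in", "which", "about"}


def tokens(text):
    """Lowercase word set with stopwords removed."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def retrieve(query, k=2):
    """Return up to k documents that share the most words with the query."""
    q = tokens(query)
    scored = [(len(q & tokens(doc)), doc) for doc in DOCUMENTS]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:k] if score > 0]


def build_prompt(query, k=2):
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


print(build_prompt("Which is bigger, a basketball or a bowling ball?"))
```

The resulting prompt would then be sent to the model, so its answer can rest on the retrieved measurements rather than on statistical association alone.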

However, these technical patches cannot change its non-conscious, non-intentional core nature. This raises a series of profound ethical questions: How should we govern a powerful intelligence that cannot truly empathize or bear moral responsibility? When it is widely used in critical domains like legal judgments, medical diagnoses, and financial trading, what unforeseen systemic risks will its “inhuman” thinking modality introduce?

Ultimately, this exploration leads us to a powerful conclusion: Large Language Models are not replicas of human intelligence. They are a unique “alien flower of wisdom,” one that grows in a different soil (data), abides by different laws (algorithms), and unfurls its “thinking” star-map under a vector firmament.

It is both a powerful tool and a mirror reflecting ourselves. By understanding its “inhuman” way of thinking, we in turn see the uniqueness of human intelligence more clearly: our thoughts are deeply rooted in the embodied experience of the physical world, illuminated by the light of consciousness, and driven by the compass of intention.

Large Language Models have expanded the imagined territory of what “intelligence” can be. They remind us that the forms of thought are perhaps far more diverse and strange than we, based on our own experience, have ever supposed. Therefore, to understand this “alien flower of wisdom” is both the next step in exploring the frontier of intelligence and a crucial step back to see and understand our own place in it all.